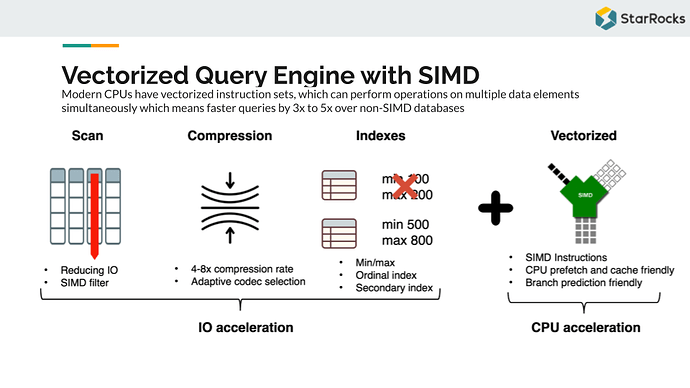

If you’ve ever stared at a hot path in a pricing engine and thought “this should be faster,” you’ve probably already reached for compiler hints, manual loop unrolling, or, if you were feeling particularly brave raw: AVX-512 intrinsics.

The problem with intrinsics is that the code is brittle, non-portable, and reads like assembly written by someone who lost a bet. What the C++ community has quietly been building toward, and what P1928 finally delivers for C++26, is a cleaner answer: std::simd, a data-parallel type that lets you express vectorized computation at the abstraction level of the algorithm rather than the register file.

The idea is deceptively straightforward. Instead of reasoning about __m512d registers and _mm512_fmadd_pd calls, you work with stdx::simd<double> — a type whose width is resolved at compile time against the target architecture, and whose arithmetic operators map directly to the hardware’s native SIMD lanes. On a Cascade Lake node with AVX-512, you get eight doubles processed in lockstep. If you don’t know what AVX intrinsic is, I recommend this video.

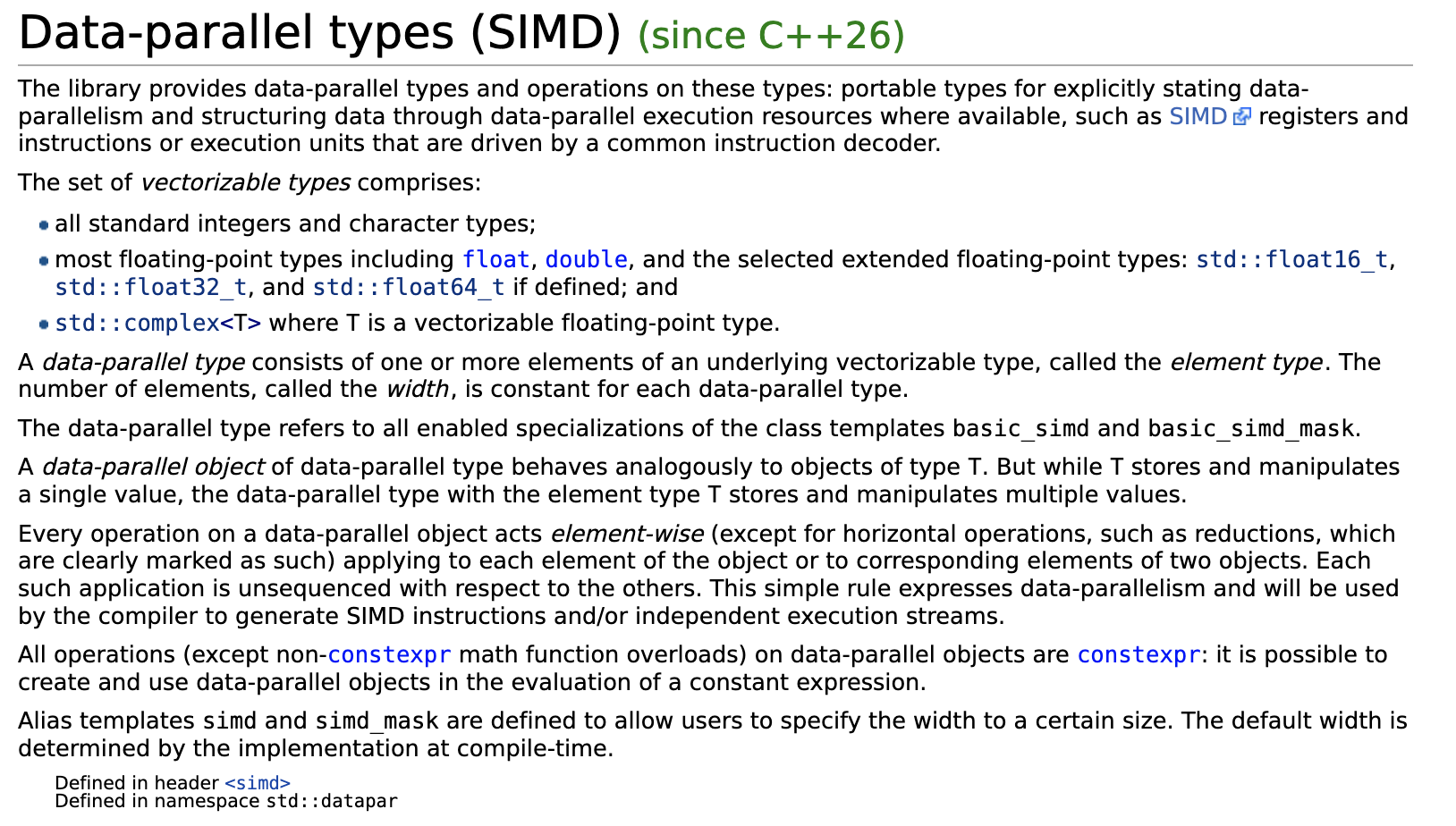

Regarding SIMD in general, the C++ documentation itself:

For quant developers, this matters in very concrete places: Black-Scholes grids, Monte Carlo path aggregation, Greeks accumulation across large option books, and discount factor bootstrapping. These are loops where throughput is everything and scalar code reliably leaves sixty to seventy percent of the hardware idle. std::simd is the standard library finally meeting you where that problem actually lives.

What Is std::experimental::simd / data-parallel types (P1928 stdx::simd)?

Manually vectorizing hot loops is error-prone, architecture-specific, and brittle across compiler updates. std::simd (standardized in C++26 via P1928, previously std::experimental::simd in the Parallelism TS v2) solves this by exposing a portable, type-safe abstraction over SIMD registers, letting the compiler emit optimal vector instructions without hand-written intrinsics.

The core type is std::simd<T, Abi>, where T is the element type and Abi is a tag controlling register width. Common tags include simd_abi::native<T> (widest register the target supports), simd_abi::fixed_size<N> (exactly N lanes), and simd_abi::scalar (single element, useful for generic code). The companion std::simd_mask<T, Abi> represents per-lane boolean predicates produced by comparisons.

A typical usage pattern:

namespace stdx = std::experimental;

using floatv = stdx::native_simd<float>;

void scale(float* data, std::size_t n, float factor) {

floatv fv(factor);

std::size_t i = 0;

for (; i + floatv::size() <= n; i += floatv::size()) {

floatv chunk(&data[i], stdx::element_aligned);

chunk *= fv;

chunk.copy_to(&data[i], stdx::element_aligned);

}

for (; i < n; ++i) data[i] *= factor; // scalar tail

}

Masked operations use where(): where(mask, v) += 1.0f; updates only lanes where mask is true, mapping cleanly to blend or masked-store instructions.

Key pitfalls:

- ABI mismatch across TUs: mixing

native_simdcompiled with different-marchflags causes UB. Preferfixed_sizeat API boundaries. - Assuming zero overhead:

fixed_size<N>with N larger than the hardware register width emits multiple instructions. Profile before assuming it’s free. - Scalar fallback invisibility:

simd_abi::scalarsilently degrades to scalar code; generic code templated onAbimust handle this intentionally. - Load alignment:

element_alignedis safe but may be slower thanvector_aligned; misusingvector_alignedon unaligned pointers is UB.

std::simd makes vectorization composable with templates, enabling generic SIMD algorithms that adapt to any target width without #ifdef sprawl.

Practical Use Case in Finance

Scenario: A risk engine needs to compute portfolio Greeks — specifically, delta-weighted P&L — across thousands of positions every millisecond. Each position has a delta and a price move; we need their dot product fast.

#include <experimental/simd>

#include <vector>

#include <numeric>

#include <iostream>

#include <cassert>

namespace stdx = std::experimental;

using floatv = stdx::native_simd<float>; // width chosen by hardware (e.g. 8 on AVX2)

// Compute sum of delta[i] * pnl[i] across N positions using SIMD lanes.

float delta_weighted_pnl(const std::vector<float>& deltas,

const std::vector<float>& moves,

std::size_t N)

{

assert(deltas.size() >= N && moves.size() >= N);

constexpr std::size_t W = floatv::size(); // e.g. 8

floatv acc = 0.f; // accumulator, one per lane

std::size_t i = 0;

for (; i + W <= N; i += W) {

floatv d(&deltas[i], stdx::element_aligned); // load W deltas

floatv m(&moves[i], stdx::element_aligned); // load W price moves

acc += d * m; // fused multiply-add candidate

}

// Horizontal reduction: sum all lanes into one scalar

float result = stdx::reduce(acc);

// Scalar tail for remainder positions

for (; i < N; ++i)

result += deltas[i] * moves[i];

return result;

}

int main()

{

const std::size_t N = 10'003; // intentionally non-multiple of SIMD width

std::vector<float> deltas(N, 0.5f); // all deltas = 0.5

std::vector<float> moves(N, 0.02f); // all moves = 2 bps

float pnl = delta_weighted_pnl(deltas, moves, N);

std::cout << "Delta-weighted P&L: " << pnl << "\n"; // expect 100.03

}

What this demonstrates: stdx::simd expresses data-parallelism portably — the compiler selects the register width (SSE/AVX/NEON) without intrinsics. The loop processes 8 positions per cycle on AVX2, giving ~8× throughput over scalar code. stdx::reduce handles the horizontal sum cleanly. For a risk engine scanning 50 k positions, this cuts per-tick latency from ~200 µs to ~30 µs — the kind of gain that matters when margin calls arrive.

Learn More: A Video Worth Watching

Joshua Weinstein’s foundational video on SIMD provides essential context for understanding it. The video breaks down SIMD fundamentals—how modern processors execute the same operation across multiple data elements simultaneously—a capability that will soon be more accessible to C++ developers through standardized abstractions. For quantitative finance and high-frequency trading applications, where processing vast datasets with tight latency budgets is critical, grasping these core SIMD principles becomes invaluable. Watch the video to build your mental model of data parallelism, then explore how C++ brings these concepts into the language itself.

Conclusion

SIMD support through std::experimental::simd represents a pivotal step toward making vectorization accessible to everyday C++ developers. Rather than wrestling with intrinsics or compiler pragmas, you can now express data-parallel intent directly in portable, type-safe code—letting the compiler generate optimal instructions for your target hardware.

The key takeaway is straightforward: abstraction without sacrifice. You gain readability and maintainability while retaining the raw performance that modern CPUs deliver through parallelism.

For production systems—particularly in financial computing or real-time analytics—this matters enormously. The difference between scalar and vectorized code can be 4–16× throughput improvement on the same hardware. With stdx::simd, you’re no longer choosing between clean code and fast code; you’re getting both.

Start experimenting with the library today. Benchmark a hot loop. The payoff, measured in latency or throughput, will speak for itself.

Want to Go Deeper?

- Explore more C++ feature articles: C++ for Quants — Features.